Software Engineering Is Not Dying, It's Evolving. And You'd Better Catch Up!

A Brief History of Getting It Wrong

Every decade or so, the tech industry holds a little funeral for the software engineer.

In the 1980s, it was CASE tools. Computer-Aided Software Engineering. The pitch: visual environments that would let business analysts build systems without touching code. No more cryptic developers hoarding arcane knowledge. Just drag some boxes, connect some arrows, ship the thing. The industry spent billions. It did not work. The developers were fine. Slightly smug, actually.

In the 2000s, it was offshore outsourcing. Why pay a Toronto engineer $90,000 when you could pay an equally capable engineer in Bangalore $15,000? Because, it turned out, time zones are brutal and "cheaper" has a funny habit of becoming "catastrophically expensive" two years later when you're debugging a system nobody on your team understands. The developers survived that too.

Then came no-code. Low-code. Webflow, Bubble, Salesforce, and roughly four hundred other platforms promising to eliminate the programmer and democratize creation. Those platforms are genuinely useful. For certain things. But the engineers are still here, still getting paid, now also building on top of the no-code platforms.

Now it's AI. And I get why this one feels different. Because it is, a little. The tools are genuinely impressive in ways the previous eulogies never were. GitHub Copilot writes real code. Claude solves real debugging problems. Cursor finishes your sentences before you've decided what the sentence is about.

But here's what hasn't changed: the people declaring software engineering dead are, once again, confusing typing with thinking.

The Part Everyone Keeps Getting Wrong

Let's talk about what software engineers actually do, because the discourse around AI keeps skipping this part.

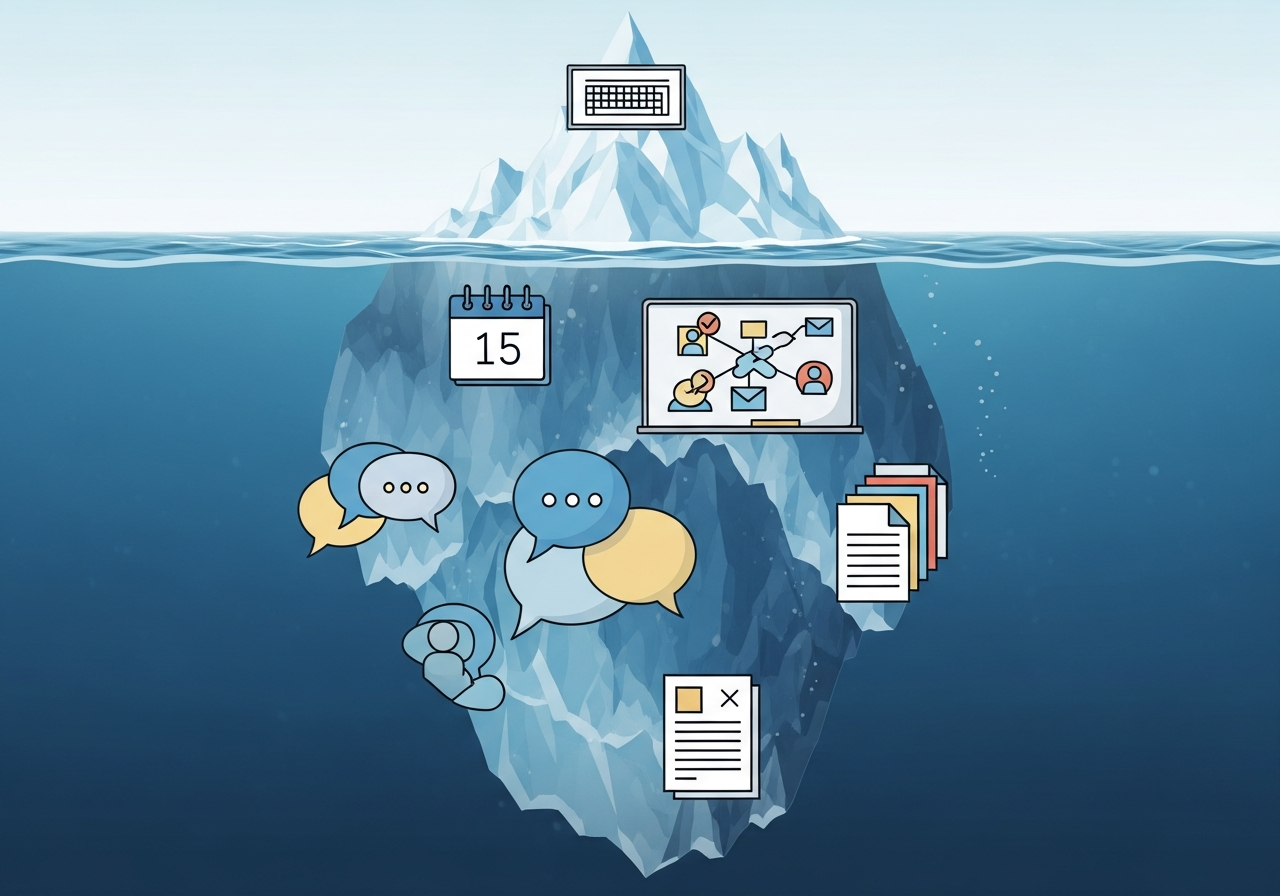

Coding is typing. It's the act of expressing a known solution in a language a machine can run. It's syntax and structure and figuring out why the loop is off by one.

Software engineering is everything else. It's the two-hour meeting where you ask increasingly specific questions until you understand what the client actually needs, which is rarely what they asked for. It's reading the proposed architecture and catching the design decision that will cause a production outage six months from now. It's the conversation where you tell a product manager that the feature they want is technically possible, will take three months, and will immediately be used by exactly two people, and that maybe there's a better way to solve the underlying problem.

It's judgment. Communication. Constraint navigation. The unglamorous, deeply human work of turning ambiguous problems into things a computer can reliably execute.

Studies that have actually tracked how engineers spend their time put coding at roughly 20% of working hours. Twenty. The rest is meetings, code review, planning, debugging (which is mostly reading and thinking, not writing), documentation, and an uncomfortable number of Slack messages that should have been a ten-minute conversation.

AI is genuinely impressive at the 20%.

For the 80%, it is, at best, a slightly overconfident intern. Useful. Needs supervision.

We Have Seen This Movie Before

The "vibe coding" trend, prompting an AI and shipping whatever it produces without really understanding it, is not a new idea. It's CASE tools with better marketing.

The engineers who leaned hardest into CASE tools in the 1980s produced bloated, unmaintainable systems. The abstraction hid complexity instead of managing it. You still had to understand what was happening underneath. Now you just had to understand it while debugging code you didn't write and couldn't fully read.

This pattern has repeated itself reliably throughout software history. Every time the industry invents a tool that raises the abstraction level, there is a chorus of voices announcing the end of programmers. And every time, what actually happens is that the new abstraction makes it possible to build more things, which creates more problems to solve, which creates more demand for engineers.

Assembly to C. C to higher-level languages. Bare metal to cloud. Manual deployments to CI/CD. Each transition compressed certain roles and expanded others. The engineers who understood the new abstraction layer and worked competently within it? They did fine. The ones who ignored it and kept doing things the old way? Less fine.

AI is the next layer. That's it. That's the whole story.

The job is not disappearing. The job is moving up a level, same as it always has, and asking whether you're coming with it.

What The Research Actually Says

Here is the thing both AI pessimists and AI boosters manage to miss: the actual research on what AI agents can do in practice lands firmly in the middle of the discourse.

The Anthropic data on agentic coding shows real capability. AI can complete complex, multi-step engineering tasks. It can refactor systems, write tests, debug across files, and do it faster than most humans doing the same work manually. The 2026 Agentic Coding Trends Report shows AI-assisted engineers are measurably more productive than engineers without it.

Also from the same research: AI agents fall apart on ambiguous problem definitions. They struggle with novel constraints. They confidently produce wrong answers when the question is slightly outside their training distribution. They will write you a beautiful login system with a subtle security flaw and no particular indication that anything is wrong.

In plain language: AI is great at executing inside a clear frame. It is unreliable at deciding what the frame should be.

That framing problem is 80% of real engineering work. Someone has to understand the system well enough to specify the problem correctly. Someone has to review the output and know when it's subtly wrong. Someone has to catch the architectural mistake before it ships to production and quietly costs the company a year of technical debt. That someone is you. A well-prompted, AI-augmented version of you, hopefully.

The research on generative AI and empirical software engineering calls this a paradigm shift, and that framing is right. But paradigm shifts in software have never eliminated the engineers. They've redefined what engineering means.

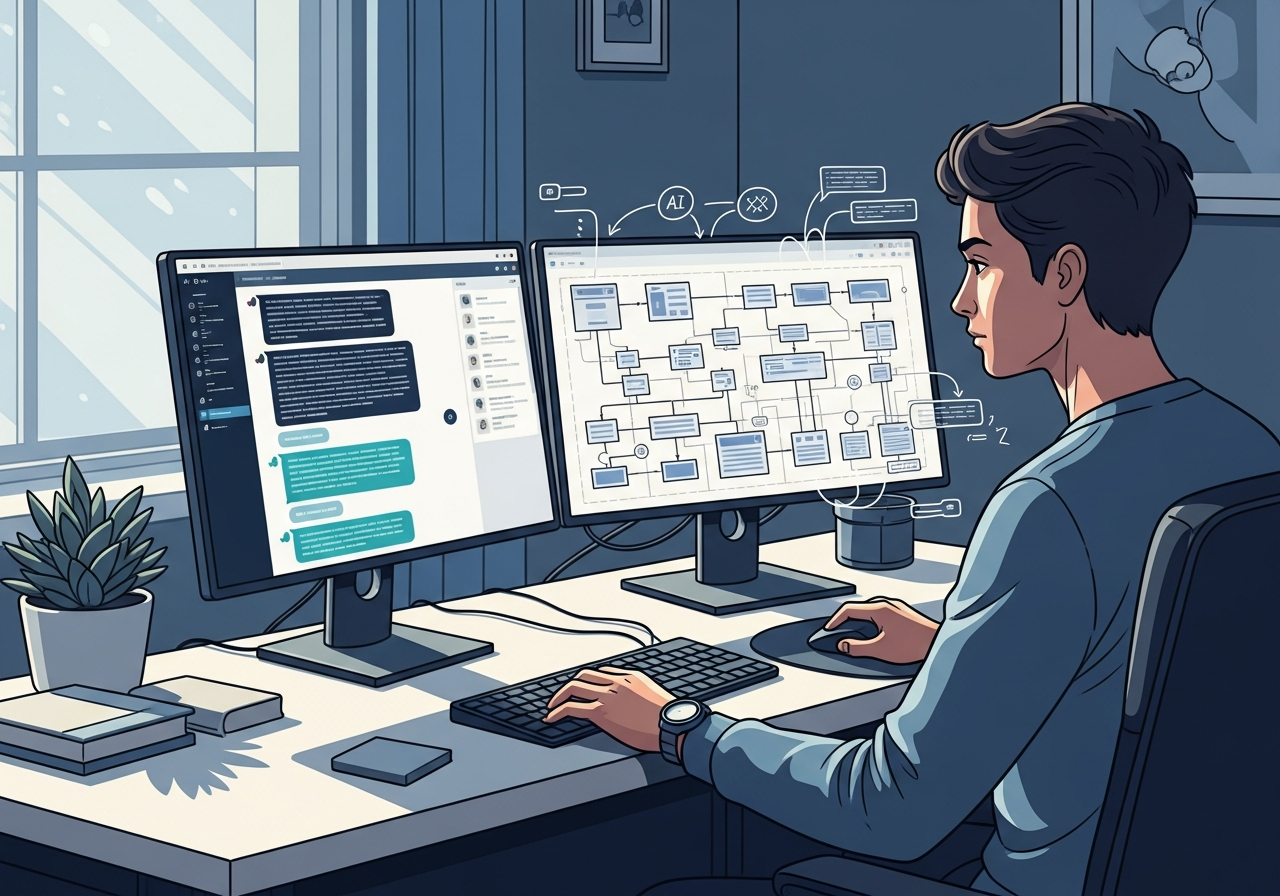

Right now, it means directing AI effectively. Not just using it casually.

There is a real difference between an engineer who types "write me a login system" and an engineer who specifies the session management approach, the edge case handling, the security constraints, and the test requirements before the AI writes a line. The second engineer is using AI as a force multiplier. The first is using it as a slot machine and calling the result shipping.
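To make the contrast concrete, here is a minimal Python sketch of the kind of gap a vague "write me a login system" prompt leaves open. `naive_check` is the sort of code that passes every demo: it compares a stored plaintext password with `==`, which is not guaranteed to run in constant time. `checked_login` is roughly what you would expect after stating the security constraints up front: salted, deliberately slow hashing via the standard library's `hashlib.pbkdf2_hmac`, and a constant-time comparison via `hmac.compare_digest`. The function names, the in-memory `store` dict, and the iteration count are illustrative assumptions; this is not a production authentication design.

```python
import hashlib
import hmac
import os

# Illustrative PBKDF2 work factor (an assumption; check current guidance).
ITERATIONS = 600_000


def hash_password(password: str, salt: bytes) -> bytes:
    # Salted, deliberately slow key derivation from the standard library.
    return hashlib.pbkdf2_hmac("sha256", password.encode(), salt, ITERATIONS)


def register(store: dict, username: str, password: str) -> None:
    # Hypothetical in-memory store: username -> (salt, derived key).
    salt = os.urandom(16)  # fresh random salt per user
    store[username] = (salt, hash_password(password, salt))


def naive_check(stored_plaintext: str, attempt: str) -> bool:
    # Works in every happy-path demo, but implies plaintext storage and
    # uses ordinary ==, which is not guaranteed to be constant-time.
    return stored_plaintext == attempt


def checked_login(store: dict, username: str, attempt: str) -> bool:
    record = store.get(username)
    if record is None:
        # Early return for unknown users: still a timing signal (see note).
        return False
    salt, expected = record
    # compare_digest's running time does not depend on where the bytes differ.
    return hmac.compare_digest(hash_password(attempt, salt), expected)
```

Both functions return the right answer for the right password, which is exactly why the difference is easy to miss in review. Even `checked_login` returns early for unknown usernames, which can reveal through timing whether an account exists; a stricter specification would have to say whether that matters.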

What To Actually Do

Stop watching and start using.

If you are a working software engineer who is not using AI tools in your daily workflow, you are behind. Not in a hand-wavy "the future is coming" way. Right now, today, your colleague who adopted Cursor three months ago is closing the same tickets you are closing, in less time, with more bandwidth left for the interesting work.

GitHub Copilot, Cursor, Claude, Codeium. Pick one. Use it on real work. The goal is not to be impressed. The goal is to figure out where it makes you faster and where it fails you so you can build a workflow around it.

Engineers who refuse AI tools on principle have made a career decision. They may not know they made it yet.

Double down on the 80%.

If AI is compressing the value of typing, the response is to become exceptional at the parts AI cannot do.

Communication. The ability to extract the real problem from a client who describes the wrong one. The ability to explain why a technically feasible thing is still a bad idea. The ability to write documentation that a human being can actually follow.

System design. Looking at an architecture and seeing where it fails. Understanding the trade-offs at scale. Making the call that the elegant solution is going to be a nightmare to operate at 3 AM and proposing something boring and reliable instead.

Product sense. Knowing when to build the thing and when to question whether the thing is the right thing to build.

None of that is in the AI's wheelhouse yet. All of it is the difference between an engineer who is increasingly hard to replace and one whose role is quietly getting restructured.

Learn to direct, not just prompt.

Most people use AI tools the way they use Google. Vague query, skim the result, move on. That produces mediocre output and the impression that AI isn't that useful.

The engineers getting real leverage out of AI treat it like a very fast, very literal junior developer. They specify clearly. They review carefully. They catch the mistakes because they understood the problem well enough to recognize when the solution is wrong.

That is a learnable skill. Go learn it.

Read the market, not the takes.

The Indeed Hiring Lab data from January 2026 is not subtle: job postings mentioning AI are growing. Ravio's compensation data shows engineers with AI skills commanding salary premiums over peers without them.

What is also true: the "junior developer who implements pre-specified tickets without broader context" role is getting thinner. The work that used to take a team of five is getting done by a team of three who use AI aggressively.

This is not a reason to panic. It is a reason to be honest about which profile you currently are, and which one you want to be.

If You're Building Things In Canada

Canada produces world-class engineering talent and then consistently fails to build the compensation structures to keep it. The Waterloo pipeline exports engineers to Google, Stripe, and Shopify's San Francisco offices at rates that should embarrass every Bay Street bank that still charges $16.95 a month for a chequing account.

AI does not fix that. But it does change the math for Canadian engineers who want to stay in Canada. An engineer operating with real AI leverage can produce something like the output of 1.5 engineers. That makes the Canadian compensation gap more survivable and makes remote contracts with American companies significantly more achievable. That is actual good news, if you're willing to do the work to capture it.

The eulogy is wrong again. But unlike every previous version, this one has a footnote: the engineers who read it, nodded knowingly, and then changed nothing are going to find out why it was worth paying attention to.
