I Work at Meta. Here Are the 3 Skills That Actually Matter for Software Engineers Right Now.

Most articles about the top skills for software engineers are written by people who read other articles about the top skills for software engineers. I am writing this from inside one of the companies actively reshaping what engineering looks like. The gap between engineers who are thriving and those who are falling behind is becoming very visible. It has almost nothing to do with how well they write code.

The Problem: Most Engineers Are Using AI Wrong

Most engineers have treated AI adoption like a feature upgrade. They added Copilot to VS Code, asked the chatbot to fix bugs when stuck, and called it a day. That is using AI as a better Stack Overflow, and it leaves about 90% of the value on the table.

Here are the three things that actually separate engineers who are pulling ahead.

Skill 1: Adaptability. There Are New Tools Every Single Week

This is not an exaggeration. The pace of new tooling is relentless. In the last few months alone: OpenAI shipped Codex, a cloud-based coding agent that can work on your codebase autonomously. Anthropic released Claude Code, a CLI tool that lets you run Claude directly in your terminal against your actual project files. Agent frameworks are evolving so fast that what was the best setup six months ago is already outdated.

Adaptability is not a personality trait. It is a discipline. It looks like this: you hear about a new tool, you try it that same week, you run it on a real task, and you decide whether it earns a spot in your workflow. Not in six months once it has more reviews. This week.

The engineers falling behind are the ones waiting for a tool to be fully mature and proven before they touch it. In this environment, that means you are always six months behind the people who tried it on day one. That gap compounds.

You do not need to master everything. You need to stay close enough to know what exists, what it does, and whether it applies to your work. A one-hour experiment every week is the difference between leading and catching up.

Skill 2: Prompting Is Precision Engineering. Here Is the Difference

"Fix the login bug" is not a prompt. It is a prayer.

That is what most engineers actually send to an AI when they are stuck. They get a generic answer, decide it is not useful, and go back to doing it manually. The problem is not the AI. The problem is the input.

Here is what a real prompt looks like for the same situation:

Context: I have a Next.js app with JWT authentication. The login function calls /api/auth/login, receives a token, and stores it in localStorage.
Problem: After a successful login the user is redirected to /dashboard but the auth state is undefined on the first render, causing a flash of the unauthenticated view before correcting.
Expected: The auth state should be populated before the first render.
Constraints: No Redux, using React Context.
Steps:
1) Identify where the auth state initialization happens.
2) Determine if the token is being read before the component mounts.
3) Suggest the minimal change to fix the race condition.
Verification: After the fix, refreshing /dashboard should not show the unauthenticated state at any point.

That prompt gives the AI everything it needs to give you something useful. Context, problem, expected behavior, constraints, a step-by-step approach, and a way to verify the answer. The output quality scales directly with the precision of the input. This is engineering, not chatting.

Treat every prompt like a function signature. Define your inputs, define your expected output, define your constraints. The AI is not magic. It is a very capable system that performs exactly as well as the specification it receives.
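One way to make the function-signature analogy concrete is a small template that refuses to render until every section is filled in. This is only a sketch: `PromptSpec` and its field names are hypothetical, chosen to mirror the sections of the example prompt above, and the rendered text would go to whichever assistant you use.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class PromptSpec:
    """Hypothetical container mirroring the sections of a well-specified prompt."""
    context: str
    problem: str
    expected: str
    constraints: str
    steps: tuple[str, ...]
    verification: str

    def render(self) -> str:
        # Refuse to build a prompt with an empty section: a missing field
        # is the prompt equivalent of a missing argument.
        for f in fields(self):
            if not getattr(self, f.name):
                raise ValueError(f"missing prompt section: {f.name}")
        numbered = "\n".join(f"{i}) {s}" for i, s in enumerate(self.steps, start=1))
        return "\n".join([
            f"Context: {self.context}",
            f"Problem: {self.problem}",
            f"Expected: {self.expected}",
            f"Constraints: {self.constraints}",
            f"Steps:\n{numbered}",
            f"Verification: {self.verification}",
        ])


spec = PromptSpec(
    context="Next.js app with JWT authentication; token stored in localStorage.",
    problem="Auth state is undefined on the first render of /dashboard.",
    expected="Auth state is populated before the first render.",
    constraints="No Redux, using React Context.",
    steps=(
        "Identify where the auth state initialization happens.",
        "Determine if the token is read before the component mounts.",
        "Suggest the minimal change to fix the race condition.",
    ),
    verification="Refreshing /dashboard never shows the unauthenticated state.",
)
print(spec.render())
```

The validation step is the useful part. If you cannot fill in `expected` or `verification`, that usually means you do not yet know what a good answer looks like, and the assistant will not know either.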

Skill 3: Stop Working on One Problem at a Time

This is the shift most engineers have not made yet, and it is the biggest one.

The old model: one problem, one session, one output. You open the chat, describe the issue, get an answer, apply it, close it, move to the next thing. AI as a faster rubber duck.

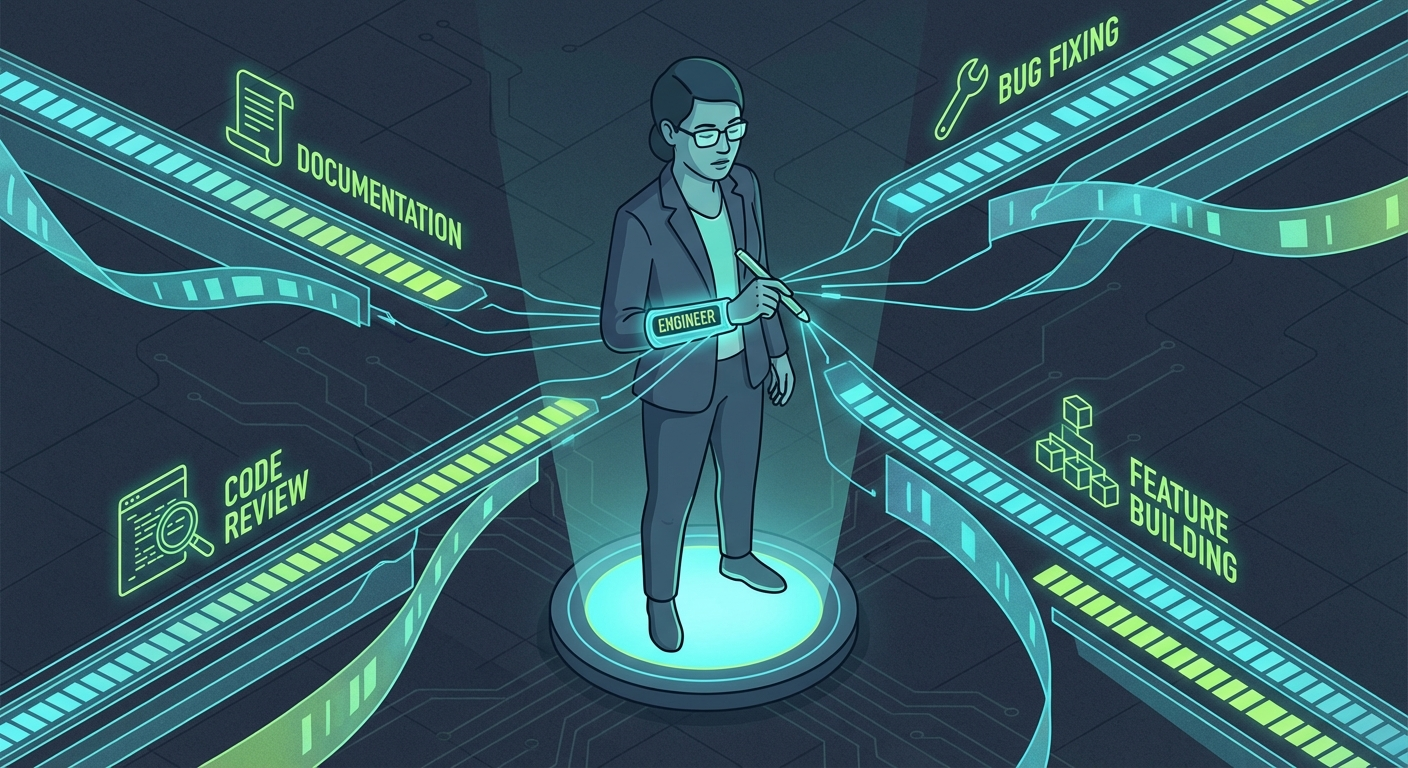

The new model: you build agents that work in parallel while you work on something else entirely. You are not the one doing the work on each thread. You are the one who architected the system and reviews the output.

Concrete examples of what this actually looks like:

An agent that triages your GitHub issues every morning. It reads new issues, classifies them by severity and component, assigns labels, and drafts an initial response. You review and approve. What used to take 45 minutes of context-switching happens while you are in your first meeting.
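A minimal sketch of the shape such a triage pipeline might take. Everything here is hypothetical: `Issue`, `Triage`, and `classify` are illustrative names, and the keyword rules inside `classify` are a stand-in for the model call a real agent would make. Fetching issues and applying labels through the GitHub API is deliberately left out. The point is the structure: the agent proposes, and nothing is applied until a human approves.

```python
from dataclasses import dataclass


@dataclass
class Issue:
    number: int
    title: str
    body: str


@dataclass
class Triage:
    issue: Issue
    severity: str
    component: str
    draft_reply: str
    approved: bool = False  # flipped only by a human reviewer


def classify(issue: Issue) -> tuple[str, str]:
    """Placeholder for a model call: returns (severity, component)."""
    text = f"{issue.title} {issue.body}".lower()
    urgent = any(word in text for word in ("crash", "data loss", "outage"))
    severity = "high" if urgent else "normal"
    component = "auth" if "login" in text or "token" in text else "unknown"
    return severity, component


def triage(issues: list[Issue]) -> list[Triage]:
    results = []
    for issue in issues:
        severity, component = classify(issue)
        reply = (
            f"Thanks for the report. Tentatively labelled severity:{severity}, "
            f"component:{component}; a maintainer will confirm."
        )
        results.append(Triage(issue, severity, component, reply))
    # High-severity items first so the reviewer sees urgent issues at the top.
    return sorted(results, key=lambda t: t.severity != "high")


queue = triage([
    Issue(1, "Typo in README", "Small spelling fix."),
    Issue(2, "App crash after login", "Token refresh fails and the page crashes."),
])
for item in queue:
    print(item.issue.number, item.severity, item.component, item.approved)
```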

An agent working on Bug A while you work on Bug B. You hand off a well-scoped prompt for the first bug, let it run, and check its output when you are at a stopping point on the second. Two problems touched in the same time block.
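The hand-off pattern can be sketched with `asyncio`. In this sketch `run_agent` is a hypothetical stand-in that only sleeps and returns a string; a real version would call whatever agent tooling you use. What matters is the structure: start the delegated task, carry on with your own work, and collect the result when you reach a stopping point.

```python
import asyncio


async def run_agent(task: str, seconds: float) -> str:
    """Hypothetical agent call; the sleep simulates the agent working."""
    await asyncio.sleep(seconds)
    return f"proposed patch for {task}"


async def my_own_work(task: str, seconds: float) -> str:
    await asyncio.sleep(seconds)  # simulates you working on another bug
    return f"finished {task} by hand"


async def main() -> list[str]:
    # Start the delegated task without waiting for it to finish.
    delegated = asyncio.create_task(run_agent("Bug A", 0.2))
    mine = await my_own_work("Bug B", 0.2)
    # Stopping point reached: now collect and review the agent's output.
    theirs = await delegated
    return [mine, theirs]


results = asyncio.run(main())
print(results)
```

Because both tasks overlap, the total wall-clock time is close to the longer of the two rather than their sum, which is the whole argument for delegating a well-scoped problem.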

An agent that scans your entire codebase overnight and writes unit tests for every function that lacks coverage. You defined the pattern once: what a good test looks like in your project, which testing library you use, what edge cases to cover. The agent runs it across hundreds of functions. You wake up to a pull request. You review. Done.

This is not about writing clever scripts. It is about thinking of AI as infrastructure you design, not a tool you use reactively. Instead of writing a wiki manually, you write a prompt that generates the wiki from your codebase and PRs. Instead of context-switching between tasks, you delegate the parts that can be parallelized and stay focused on the parts that actually need you.

These are not predictions. This is what is happening right now inside companies moving fast on AI. The engineers who internalize all three of these, who stay close to new tooling, who engineer their prompts, and who build parallel systems instead of sequential workflows, are not slightly more productive. They are operating at a different level entirely.

The gap is already opening. It is just not evenly distributed yet.
