The Coding Interview Is Finally Dying. Here's What's Killing It.

ChatGPT can pass the LeetCode medium you spent six months memorizing. It does it in about four seconds, without the existential dread, and with better variable names than most people use in production. Companies have known this for a while. Most of them kept running the same interviews anyway.

That is changing.

The Problem: LeetCode Was Always Measuring the Wrong Thing

The ritual was this: spend three to six months memorizing algorithmic patterns you will never encounter in a real job. Sliding window problems. Binary tree inversions. Dynamic programming variations that belong in a university textbook, not a production codebase. Then sit in a room under artificial time pressure and try to recall them from memory while someone watches your keyboard.

What did this measure? Pattern recognition. Short-term memory. How much spare time you had for interview prep, which immediately disadvantages anyone with a family, a second job, or caregiving responsibilities. Academic background. How well you perform under a very specific kind of artificial stress.

What it did not measure: Can you debug a production incident at 2am? Can you architect a system that does not fall apart at scale? Can you read a piece of existing code and identify what is going to cause a security breach in six months? Can you work with other humans?

The LeetCode interview was always a crude filter that the industry adopted because it was easy to standardize and hard to argue with. Then AI made the whole thing impossible to defend.

The Insight: The New Interview Tests Judgment, Not Output

In October 2025, Meta started piloting a new interview format. One of the two onsite coding rounds now runs in a specialized CoderPad environment with an AI assistant built in. Candidates can use it. The interviewer is not watching whether you can write a binary search from scratch. They are watching how you think.

What do you prompt the AI to do? How do you verify what it gives you back? Do you catch the edge case it missed? Do you notice when it gives you a solution that technically works but would be a security problem the moment it hits production?
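To make "how do you verify what it gives you back?" concrete, here is a minimal Python sketch. `find_index_suggested` is a hypothetical example of the kind of draft an assistant might return. It was written for this article and not captured from any real tool. It reads like a textbook binary search but never checks the last remaining candidate. One possible verification habit is to compare the draft against a slow brute-force oracle on a few hundred small random sorted lists, and that surfaces the bug quickly. All function names here are illustrative.

```python
import random


def find_index_suggested(xs, target):
    """Hypothetical AI draft: binary search over a sorted list. Looks right, isn't."""
    lo, hi = 0, len(xs) - 1
    while lo < hi:  # Bug: should be <=, so the last remaining candidate is never checked.
        mid = (lo + hi) // 2
        if xs[mid] == target:
            return mid
        if xs[mid] < target:
            lo = mid + 1
        else:
            hi = mid - 1
    return -1


def find_index_fixed(xs, target):
    """Same search with the loop condition corrected."""
    lo, hi = 0, len(xs) - 1
    while lo <= hi:
        mid = (lo + hi) // 2
        if xs[mid] == target:
            return mid
        if xs[mid] < target:
            lo = mid + 1
        else:
            hi = mid - 1
    return -1


def is_correct(xs, target, result):
    """Brute-force oracle: any index holding target is fine; -1 only if target is absent."""
    if target in xs:
        return 0 <= result < len(xs) and xs[result] == target
    return result == -1


def find_counterexample(search, trials=500, seed=0):
    """Run search on small random sorted lists; return the first failing input, or None."""
    rng = random.Random(seed)  # Fixed seed so any failure can be reproduced.
    for _ in range(trials):
        xs = sorted(rng.randint(0, 9) for _ in range(rng.randint(0, 6)))
        target = rng.randint(0, 9)
        if not is_correct(xs, target, search(xs, target)):
            return xs, target
    return None


if __name__ == "__main__":
    print(find_index_suggested([5], 5))  # -1, even though 5 is at index 0
    print(find_counterexample(find_index_suggested))  # a failing (xs, target) pair
    print(find_counterexample(find_index_fixed))  # None
```

The point is not this particular bug. A cheap, independent check catches what a confident-looking answer hides.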

Amazon has signalled similar moves internally. The framing: less LeetCode, more real-world tasks with AI tools available. The question is no longer whether you can produce code. Any mid-tier AI can produce code. The question is whether you can evaluate it.

This is the shift. The skill is judgment. Can you tell a good solution from a fragile one? Can you spot the insecure one when both of them work? Can you decompose a problem clearly enough to direct AI toward something useful, rather than generating plausible-looking garbage at scale?

The bar is not lower. It is finally measuring the right thing.

What To Actually Do

The skills that will get you hired at a serious company in 2026 are not the ones that got people hired in 2019.

System design is now the baseline, not the ceiling. If you can reason clearly about trade-offs at scale (distributed systems, data consistency, latency versus throughput, failure modes), you are demonstrating something an AI cannot fake for you in real time. Practice designing real systems end to end, not toy examples. Think: how would I build a notification system that handles 10 million users? Then actually reason through it out loud.

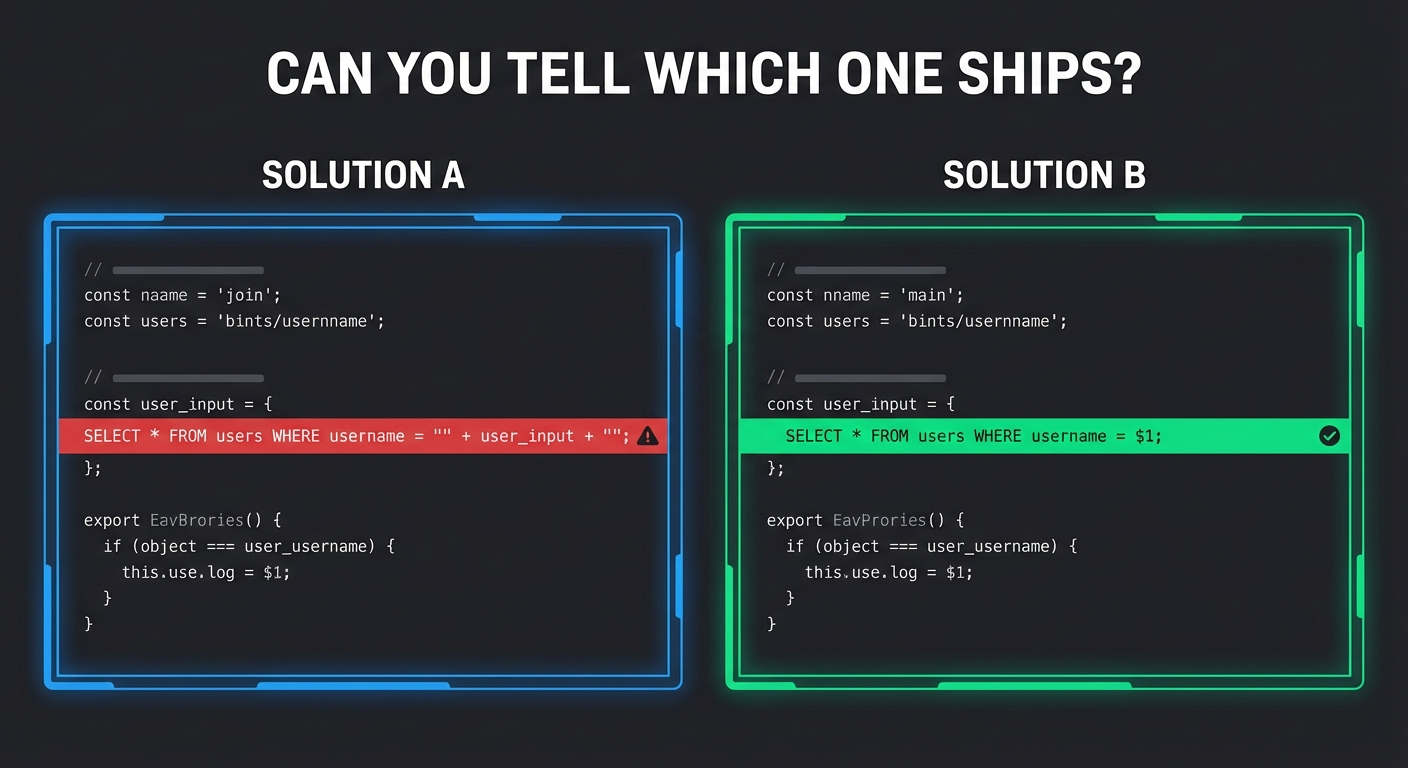
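Reasoning through the notification-system question out loud usually starts with a back-of-envelope estimate. The sketch below uses assumptions chosen purely for illustration. It assumes each of the 10 million users receives 5 notifications a day, peak traffic runs at 10x the daily average, a stored notification takes about 1 KB, and notifications are kept for 30 days. None of these numbers come from a real system, and `estimate_load` is a hypothetical helper.

```python
SECONDS_PER_DAY = 24 * 60 * 60  # 86,400


def estimate_load(users, per_user_per_day, peak_to_average, bytes_each, retention_days):
    """Back-of-envelope throughput and storage; every input is an assumption to state aloud."""
    per_day = users * per_user_per_day
    avg_per_sec = per_day / SECONDS_PER_DAY
    return {
        "per_day": per_day,
        "avg_per_sec": avg_per_sec,
        "peak_per_sec": avg_per_sec * peak_to_average,
        "storage_bytes": per_day * bytes_each * retention_days,
    }


if __name__ == "__main__":
    # Illustrative assumptions only: 10M users, 5 notifications/user/day,
    # 10x peak-to-average ratio, ~1 KB per stored notification, 30-day retention.
    load = estimate_load(10_000_000, 5, 10, 1_000, 30)
    print(f"{load['per_day']:,} notifications/day")          # 50,000,000 notifications/day
    print(f"{load['avg_per_sec']:.0f}/s average")             # 579/s average
    print(f"{load['peak_per_sec']:.0f}/s peak")               # 5787/s peak
    print(f"{load['storage_bytes'] / 1e12:.1f} TB retained")  # 1.5 TB retained
```

The exact figures matter less than saying the assumptions out loud so the interviewer can push on them. If the peak ratio or the retention period changes, the capacity and storage plan changes with it.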

Develop a security instinct. The emerging interview format will put two working solutions in front of you and ask which one you would ship. One of them has a SQL injection vector, or logs sensitive data in plaintext, or has a race condition under load. The candidates who catch it immediately are the ones who have been reading security post-mortems and CVEs, not grinding binary trees. OWASP Top 10 is a starting point. Learn to read code like an attacker reads it.

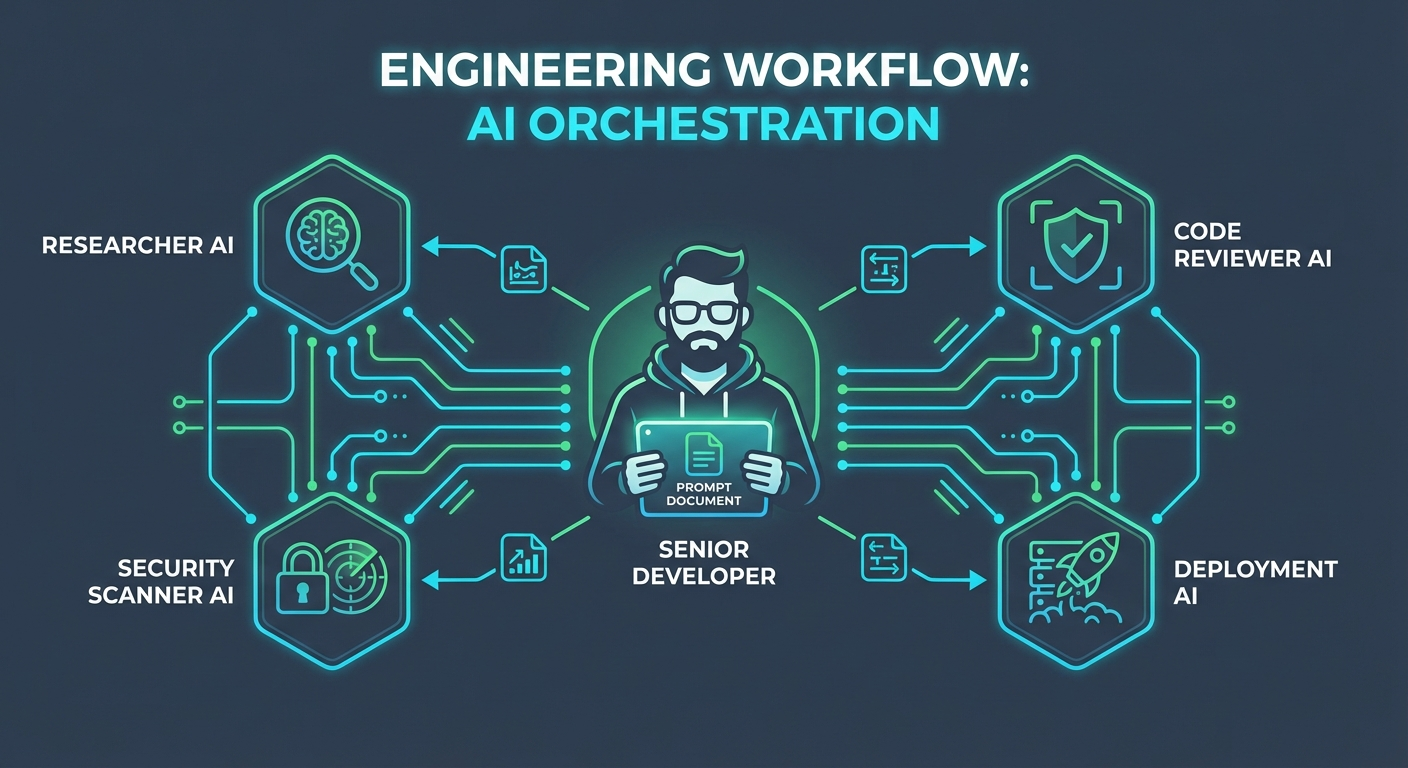
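Of the three flaws, SQL injection is the easiest to show in a few lines. Below, two hypothetical lookup functions query an in-memory SQLite table, and both return the right row for normal input. Only one of them survives a crafted name. The table, data, and function names are invented for illustration, and the safe version uses the `?` placeholder style that Python's built-in `sqlite3` module supports.

```python
import sqlite3


def make_db():
    """In-memory table with two made-up users."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT, email TEXT)")
    conn.executemany(
        "INSERT INTO users (name, email) VALUES (?, ?)",
        [("alice", "alice@example.com"), ("bob", "bob@example.com")],
    )
    return conn


def find_user_unsafe(conn, name):
    # Vulnerable: user input is spliced into the SQL text itself.
    query = f"SELECT id, name FROM users WHERE name = '{name}'"
    return conn.execute(query).fetchall()


def find_user_safe(conn, name):
    # Parameterized: sqlite3 binds `name` as a value, so it is never parsed as SQL.
    return conn.execute("SELECT id, name FROM users WHERE name = ?", (name,)).fetchall()


if __name__ == "__main__":
    conn = make_db()
    payload = "nobody' OR '1'='1"
    print(find_user_unsafe(conn, "alice"))  # [(1, 'alice')]
    print(find_user_safe(conn, "alice"))    # [(1, 'alice')]
    print(find_user_unsafe(conn, payload))  # both rows: the WHERE clause is always true
    print(find_user_safe(conn, payload))    # []
```

Both functions pass a happy-path test. The difference only shows up when you read the code the way an attacker would.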

Learn to build with agents, not just use AI tools. This is the new technical literacy, and most engineers are not there yet. The real skill is not writing a prompt to summarize text. It is designing a workflow where multiple AI components hand off tasks reliably. Can you define the right tools, the right context, the right guardrails? Can you debug an agent that is looping or hallucinating? Companies hiring for any AI-adjacent role in 2026 are looking for engineers who are comfortable inside agentic systems, not just people who open ChatGPT when they get stuck.
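"Guardrails" and "an agent that is looping" can both be sketched without any real model. In this minimal Python sketch, a hypothetical model is any function that reads the history and returns either a tool call or a final answer. The loop enforces three guardrails chosen for illustration: a tool allow-list, a cap on identical repeated calls, and a hard step budget. Real agent frameworks expose different APIs. `run_agent`, `scripted`, `stuck_model`, and the fake `search` tool are all invented here.

```python
import json
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, List

Action = Dict[str, Any]  # {"tool": name, "args": {...}} or {"final": text}


@dataclass
class RunResult:
    status: str  # "done", "unknown_tool", "loop_detected", or "max_steps"
    output: str
    steps: int
    history: List[dict] = field(default_factory=list)


def run_agent(model: Callable[[List[dict]], Action],
              tools: Dict[str, Callable[..., str]],
              task: str,
              max_steps: int = 8,
              max_repeats: int = 2) -> RunResult:
    """Minimal tool-calling loop with three illustrative guardrails."""
    history: List[dict] = [{"role": "user", "content": task}]
    call_counts: Dict[str, int] = {}
    for step in range(1, max_steps + 1):
        action = model(history)
        if "final" in action:
            return RunResult("done", action["final"], step, history)
        name = action.get("tool")
        args = action.get("args", {})
        # Guardrail 1: only tools on the allow-list can run.
        if name not in tools:
            return RunResult("unknown_tool", f"refused unknown tool {name!r}", step, history)
        # Guardrail 2: the same call with the same arguments too often signals a loop.
        key = json.dumps({"tool": name, "args": args}, sort_keys=True)
        call_counts[key] = call_counts.get(key, 0) + 1
        if call_counts[key] > max_repeats:
            return RunResult("loop_detected", f"repeated call {key}", step, history)
        history.append({"role": "tool", "name": name, "content": tools[name](**args)})
    # Guardrail 3: a hard step budget, whatever the model does.
    return RunResult("max_steps", "step budget exhausted", max_steps, history)


# --- Scripted stand-ins for a real model (hypothetical, for illustration only) ---
def scripted(actions: List[Action]) -> Callable[[List[dict]], Action]:
    it = iter(actions)
    return lambda history: next(it)


def stuck_model(history: List[dict]) -> Action:
    # Keeps requesting the identical search: the classic looping agent.
    return {"tool": "search", "args": {"q": "refund policy"}}


TOOLS = {"search": lambda q: f"(fake) results for {q!r}"}

if __name__ == "__main__":
    good = scripted([{"tool": "search", "args": {"q": "refund policy"}},
                     {"final": "(fake) summary of the search results"}])
    print(run_agent(good, TOOLS, "Find the refund policy").status)         # done
    print(run_agent(stuck_model, TOOLS, "Find the refund policy").status)  # loop_detected
```

Returning a status instead of raising makes each failure mode visible and testable. That visibility is most of what "debugging an agent" comes down to.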

Prompt engineering is a real skill, and it is learnable. The difference between a useful prompt and a useless one is not magic. It is specificity, context, constraints, and knowing what the model does and does not reliably understand. Practice writing prompts that produce consistently usable output. Practice breaking complex tasks into sub-tasks that a model can execute without drifting. This is not a soft skill. It is engineering.
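Specificity, context, constraints, and a checkable output format can all be made explicit in code. The sketch below shows one possible pattern, not a standard API. A hypothetical `build_prompt` helper spells out the task, context, constraints, and required JSON keys. A matching `parse_reply` rejects any reply that drifts from that format, so a bad response fails loudly instead of flowing downstream. No model is called, and the reply strings are stand-ins.

```python
import json
from typing import List


def build_prompt(task: str, context: str, constraints: List[str], output_keys: List[str]) -> str:
    """Assemble a prompt that states the task, context, constraints, and output format."""
    lines = [
        f"Task: {task}",
        "",
        "Context:",
        context,
        "",
        "Constraints:",
        *[f"- {c}" for c in constraints],
        "",
        "Reply with only a JSON object with exactly these keys: " + ", ".join(output_keys),
    ]
    return "\n".join(lines)


def parse_reply(reply: str, output_keys: List[str]) -> dict:
    """Accept the reply only if it matches the requested format; raise ValueError otherwise."""
    data = json.loads(reply)  # json.JSONDecodeError is a subclass of ValueError
    if not isinstance(data, dict):
        raise ValueError("reply is not a JSON object")
    missing = set(output_keys) - set(data)
    extra = set(data) - set(output_keys)
    if missing or extra:
        raise ValueError(f"format drift: missing={sorted(missing)} extra={sorted(extra)}")
    return data


if __name__ == "__main__":
    prompt = build_prompt(
        task="Summarize this incident for the on-call channel.",
        context="(incident notes would go here)",
        constraints=["At most two sentences.", "Do not guess at a root cause."],
        output_keys=["summary", "severity"],
    )
    print(prompt)
    print(parse_reply('{"summary": "Disk filled up.", "severity": "high"}', ["summary", "severity"]))
```

Pairing every prompt with a validator is what turns "usually works" into "consistently usable". When a sub-task's output fails validation, you know exactly which step drifted.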

Code review over code production. One interview format that is hard to cheat with AI is showing a candidate a piece of AI-generated code and asking them to review it. Can you identify the performance problem? The security gap? The part that will be impossible to maintain in twelve months? You either have the taste or you do not. Build it by reading other people's code (open source projects, post-mortems, pull request reviews), not by solving algorithm puzzles in isolation.
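A classic review catch is code that is correct but quietly quadratic. `dedupe_suggested` below is an illustrative example of a plausible draft, not taken from any real tool. Each `x not in result` check scans a list, so deduplicating n items costs O(n²) comparisons. The reviewed version keeps the same first-occurrence order but uses a set, which makes it O(n) on average. Both function names are hypothetical.

```python
import timeit


def dedupe_suggested(items):
    """Hypothetical AI draft: correct output, but each `in` check scans a list."""
    result = []
    for x in items:
        if x not in result:  # O(len(result)) per check, O(n^2) overall
            result.append(x)
    return result


def dedupe_reviewed(items):
    """Same output and order, with O(1) average membership checks via a set."""
    seen = set()
    result = []
    for x in items:
        if x not in seen:
            seen.add(x)
            result.append(x)
    return result


if __name__ == "__main__":
    data = list(range(2_000)) * 2
    assert dedupe_suggested(data) == dedupe_reviewed(data)
    print(timeit.timeit(lambda: dedupe_suggested(data), number=1))
    print(timeit.timeit(lambda: dedupe_reviewed(data), number=1))
```

The fix has a trade-off worth raising in the review. It requires hashable items, so a list of dicts would now raise `TypeError`. For hashable items, `list(dict.fromkeys(items))` is an idiomatic one-liner with the same result, because dicts preserve insertion order from Python 3.7 on.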

The engineers who are going to thrive in this environment are not the ones who memorized the most patterns. They are the ones who can sit down with whatever tools exist and build something that works, is secure, and will not embarrass them in a year. That has always been the job. The interview is finally catching up.
